Explainable AI in Financial Services

A comprehensive, research-backed guide to XAI, Causal AI, Mechanistic Interpretability, and Neurosymbolic AI - and how they are transforming transparency, trust, and regulatory compliance across capital markets, lending, AML, risk management, and beyond.

Understanding XAI

Before applying XAI in financial services, it is essential to understand what it is, how it differs from Interpretable AI, and how it relates to - but is distinct from - AI Observability. These distinctions have direct implications for regulatory compliance and model governance.

Explainable AI (XAI)

A set of methods and techniques that make the outputs of AI systems understandable to human users. XAI focuses on answering *why* a model made a specific decision. It can be applied to any model, including black-box systems, and is typically applied after training.


XAI vs. Interpretable AI - Key Distinctions

| Dimension | Interpretable AI | Explainable AI (XAI) |
|---|---|---|
| Model Type | White-box / Glass-box | Any model, including black-boxes |
| When Applied | Ante-hoc (by design) | Post-hoc (after training) |
| Accuracy Trade-off | Often lower accuracy | Full model accuracy preserved |
| Explanation Quality | Exact, complete | Approximate, local or global |
| Regulatory Fit | Preferred for high-stakes | Increasingly accepted with validation |

XAI Classification Framework

XAI techniques are classified along three key dimensions: when explanations are generated (ante-hoc vs. post-hoc), their scope (global vs. local), and whether they are model-agnostic or model-specific. Understanding this taxonomy is essential for selecting the right technique for each financial use case.
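The three dimensions can be encoded as a small lookup table for shortlisting techniques. This is a minimal sketch: the `Technique` record, the `shortlist` helper, and the labels given to each technique are illustrative assumptions based on common taxonomies, not a definitive classification (local methods such as SHAP, for instance, are often aggregated into global views).

```python
from dataclasses import dataclass
from enum import Enum


class Timing(Enum):
    ANTE_HOC = "ante-hoc"
    POST_HOC = "post-hoc"


class Scope(Enum):
    LOCAL = "local"
    GLOBAL = "global"


@dataclass(frozen=True)
class Technique:
    name: str
    timing: Timing
    scope: Scope  # native scope; local methods are often aggregated globally
    model_agnostic: bool


# Illustrative catalogue; the labels are assumptions following common XAI taxonomies.
CATALOGUE = [
    Technique("Shallow decision tree", Timing.ANTE_HOC, Scope.GLOBAL, False),
    Technique("LIME", Timing.POST_HOC, Scope.LOCAL, True),
    Technique("Kernel SHAP", Timing.POST_HOC, Scope.LOCAL, True),
    Technique("TreeSHAP", Timing.POST_HOC, Scope.LOCAL, False),
    Technique("Permutation feature importance", Timing.POST_HOC, Scope.GLOBAL, True),
]


def shortlist(timing=None, scope=None, model_agnostic=None):
    """Return names of techniques matching every criterion that is not None."""
    return [
        t.name
        for t in CATALOGUE
        if (timing is None or t.timing is timing)
        and (scope is None or t.scope is scope)
        and (model_agnostic is None or t.model_agnostic == model_agnostic)
    ]


# Local, model-agnostic explanations for a black-box credit model:
print(shortlist(timing=Timing.POST_HOC, scope=Scope.LOCAL, model_agnostic=True))
# ['LIME', 'Kernel SHAP']
```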

Ante-hoc (Intrinsic)

Explainability built into the model design before or during training. The model architecture itself is the explanation.
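A logistic scorecard is a classic ante-hoc example: each feature's contribution to the log-odds is simply its weight times its value, so the explanation is exact and complete. The sketch below is illustrative only; the feature names, weights, and intercept are hypothetical and uncalibrated.

```python
import math

# Hypothetical, hand-specified scorecard weights (illustrative, not calibrated).
INTERCEPT = -1.0
WEIGHTS = {"debt_to_income": 2.5, "late_payments": 0.8, "years_employed": -0.3}


def score(applicant):
    """Return default probability and each feature's exact log-odds contribution."""
    contributions = {name: w * applicant[name] for name, w in WEIGHTS.items()}
    log_odds = INTERCEPT + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))  # logistic link
    return probability, contributions


prob, contrib = score({"debt_to_income": 0.4, "late_payments": 2, "years_employed": 5})
print(round(prob, 3), contrib)
```

Because the model is the explanation, the intercept plus the contributions reproduces the log-odds exactly, with no approximation step.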

Post-hoc

Explanation methods applied after model training to any model, including complex black-boxes. Generates approximations of model behavior.
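Permutation feature importance is a simple post-hoc, global, model-agnostic method: shuffle one feature column and measure how much performance drops. The sketch treats the model purely as a callable; `black_box`, the synthetic data, and the accuracy metric are hypothetical choices for illustration.

```python
import random


def black_box(row):
    """Stand-in for an opaque model (hypothetical): only features 0 and 1 matter."""
    return 1 if row[0] + 0.5 * row[1] > 1.0 else 0


def accuracy(model, X, y):
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=5, seed=0):
    """Mean accuracy drop when each feature column is shuffled; needs only model(row)."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and the target
            X_perm = [row[:j] + [value] + row[j + 1:] for row, value in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


data_rng = random.Random(42)
X = [[data_rng.uniform(0, 2) for _ in range(3)] for _ in range(500)]
y = [black_box(row) for row in X]  # synthetic labels; use held-out real outcomes in practice
importances = permutation_importance(black_box, X, y)
print(importances)
```

The unused third feature scores exactly zero, which is the kind of approximate, behavior-based evidence post-hoc methods provide without opening the model.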

Beyond XAI: Derived Fields

XAI has spawned several specialized fields that extend its capabilities in different directions. Causal AI adds causal reasoning, Neurosymbolic AI adds logical inference, Mechanistic Interpretability reverse-engineers neural networks, and Evaluative AI provides systematic auditing frameworks.

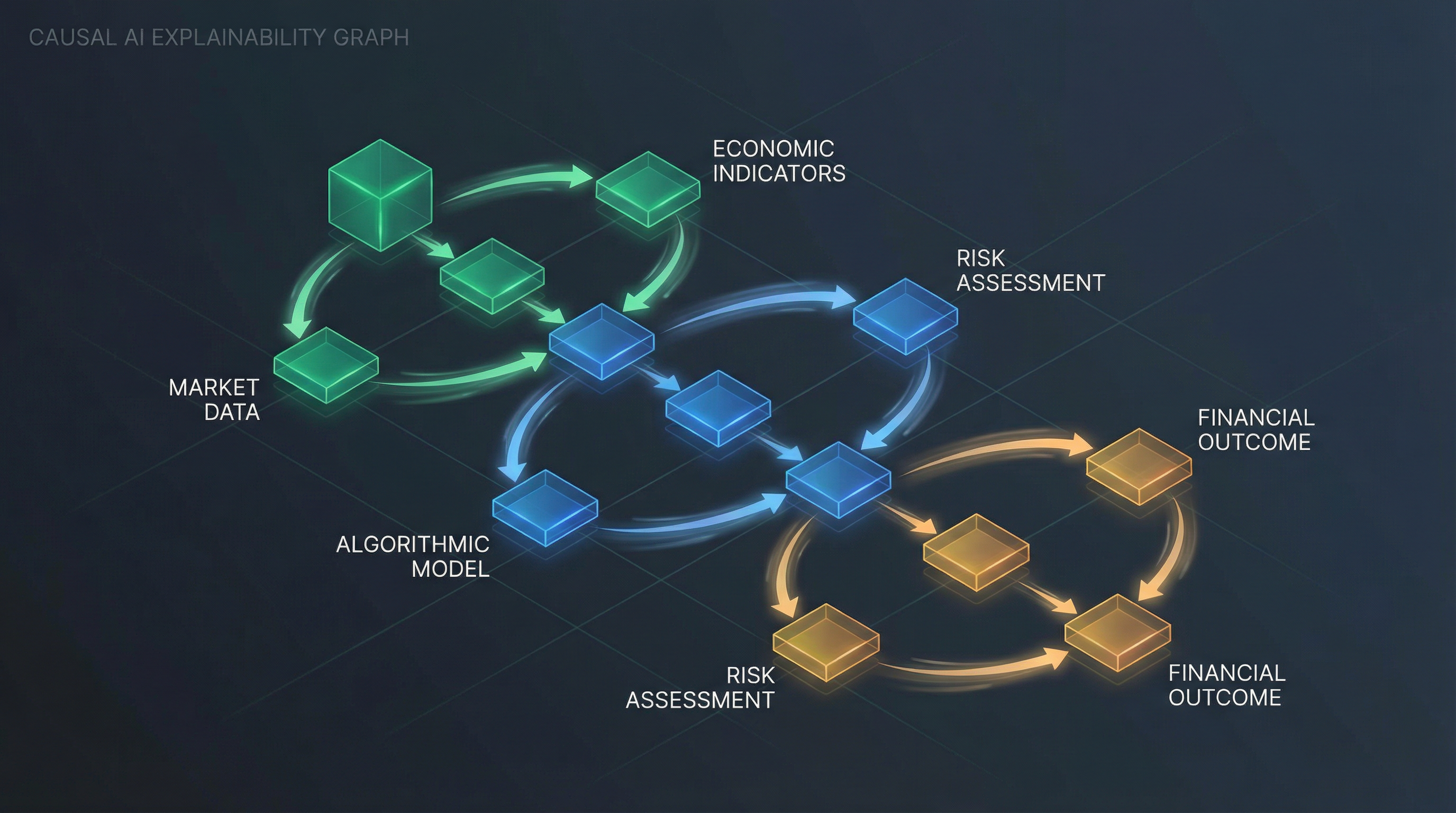

Causal AI: Directed acyclic graphs (DAGs) model cause-and-effect relationships in financial systems

Causal AI extends beyond statistical correlation to identify true cause-and-effect relationships. While standard ML models learn 'what tends to happen together,' causal models learn 'what causes what' - enabling counterfactual reasoning and policy simulation.
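A small simulation makes the distinction concrete. The sketch assumes a hypothetical linear structural causal model with DAG Z → X, Z → Y, X → Y, where Z is a confounder (say, market stress); the coefficients `A`, `B`, `C` and the `sample` helper are illustrative. Regressing Y on X picks up the confounded association, while the intervention do(X = x), which cuts the Z → X edge, recovers the causal effect `B`.

```python
import random

# Hypothetical linear SCM: X = A*Z + e_x, Y = B*X + C*Z + e_y (illustrative values).
A, B, C = 1.0, 0.5, 2.0  # B is the true causal effect of X on Y


def sample(n, seed=0, do_x=None):
    """Draw n (x, y) pairs; if do_x is set, X is fixed by intervention (Z -> X cut)."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        z, e_x, e_y = rng.gauss(0, 1), rng.gauss(0, 1), rng.gauss(0, 1)
        x = do_x if do_x is not None else A * z + e_x
        y = B * x + C * z + e_y
        rows.append((x, y))
    return rows


def ols_slope(rows):
    n = len(rows)
    mx = sum(x for x, _ in rows) / n
    my = sum(y for _, y in rows) / n
    cov = sum((x - mx) * (y - my) for x, y in rows)
    var = sum((x - mx) ** 2 for x, _ in rows)
    return cov / var


def mean_y(rows):
    return sum(y for _, y in rows) / len(rows)


n = 50_000
observational = ols_slope(sample(n))  # converges to B + C*A/(A**2 + 1) = 1.5
# Same seed => same Z and noise draws, so the difference isolates the effect of X.
interventional = mean_y(sample(n, do_x=1.0)) - mean_y(sample(n, do_x=0.0))
print(f"observational slope: {observational:.2f}, interventional effect: {interventional:.2f}")
```

The correlational slope (about 1.5) overstates the causal effect (0.5) because part of the X-Y association flows through Z, which is exactly the gap counterfactual and policy simulation need to close.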

XAI by Financial Domain

Each area of financial services uses different AI models and requires different XAI techniques. The domain sections describe the specific algorithms deployed, the explainability methods applied, and the academic studies backing each approach.

Capital Markets & Trading

Capital markets AI encompasses algorithmic trading, price prediction, portfolio optimization, and market microstructure analysis. Some of these systems, particularly in high-frequency trading, operate at microsecond latencies; across all of them, explainability is required for regulatory compliance, risk management, and strategy validation.

| AI Model / Algorithm | Purpose in Capital Markets | XAI Technique Applied |
|---|---|---|
| LSTM / Temporal Fusion Transformer | Time series price prediction and multi-horizon forecasting | Attention mechanisms, temporal SHAP |
| Reinforcement Learning (RL) | Algorithmic trading strategy optimization | Policy gradient visualization, reward attribution |
| Random Forest / XGBoost | Signal generation, factor investing | TreeSHAP, feature importance |
| Graph Neural Networks (GNNs) | Market microstructure, order flow analysis | GNNExplainer, attention weights |
| Transformer Models | Multi-modal market data fusion, news sentiment | Attention visualization, SHAP |
| Autoencoders | Anomaly detection, regime change identification | Reconstruction error attribution |
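Reconstruction error attribution, listed for autoencoders above, decomposes an anomaly score into per-feature squared errors. The sketch assumes a one-unit linear autoencoder with tied weights and a hand-fixed direction `u` (a trained autoencoder would learn its weights), applied to hypothetical standardized return features.

```python
import math

# Hypothetical standardized daily returns for three assets.
FEATURES = ["return_asset_a", "return_asset_b", "return_asset_c"]
# Tied-weight, one-unit linear autoencoder; u encodes an "all assets co-move" regime.
u = [1 / math.sqrt(3)] * 3


def reconstruction_attribution(x):
    """Return the anomaly score and each feature's share of the reconstruction error."""
    h = sum(xi * ui for xi, ui in zip(x, u))   # encoder: project onto u
    x_hat = [h * ui for ui in u]               # decoder: map the code back
    errors = [(xi - ri) ** 2 for xi, ri in zip(x, x_hat)]
    total = sum(errors)                        # anomaly score
    shares = {f: (e / total if total > 0 else 0.0) for f, e in zip(FEATURES, errors)}
    return total, shares


score, shares = reconstruction_attribution([1.0, 1.0, 4.0])
print(score, shares)
```

Here the point reconstructs as roughly [2, 2, 2], so about two thirds of the anomaly score is attributed to `return_asset_c`, the asset breaking from the co-movement pattern.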

Core XAI Techniques

A deep dive into the most widely used XAI techniques in financial services - how they work, when to use them, their strengths and limitations, and the foundational research behind each.

SHAP (SHapley Additive exPlanations) assigns each feature a contribution value (its Shapley value) for a specific prediction, based on cooperative game theory. It is among the most widely used XAI techniques in financial services because of its theoretical grounding, its consistency guarantees, and its ability to explain any model.
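The Shapley value of feature i averages its marginal contribution over every coalition S of the other features, weighted by |S|!(n - |S| - 1)!/n!. The brute-force sketch below assumes a simple value function in which features outside the coalition take fixed baseline values, whereas SHAP's estimators use expectations over background data; `exact_shapley` and the toy `credit_score` model are hypothetical. Enumeration needs O(2^n) model calls, which is why TreeSHAP (exact for tree ensembles in polynomial time) and Kernel SHAP (sampling-based) exist.

```python
from itertools import combinations
from math import factorial


def exact_shapley(f, x, baseline):
    """Exact Shapley values for f(x) relative to f(baseline), by full enumeration."""
    n = len(x)

    def value(coalition):
        # Coalition features take x's values; all others take the baseline (assumption).
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return f(z)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        contribution = 0.0
        for size in range(n):  # coalition sizes 0 .. n-1
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                contribution += weight * (value(s | {i}) - value(s))
        phi.append(contribution)
    return phi


def credit_score(z):
    # Hypothetical model: additive effect of feature 0, interaction of features 1 and 2.
    return 2 * z[0] + z[1] * z[2]


x, baseline = [1.0, 2.0, 3.0], [0.0, 0.0, 0.0]
phi = exact_shapley(credit_score, x, baseline)
print(phi)  # approximately [2.0, 3.0, 3.0]
```

The values sum to f(x) - f(baseline) = 8 (the efficiency property), and the pure interaction term is split equally between features 1 and 2.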

Global Regulatory Landscape

Regulators worldwide are requiring financial institutions to explain how their AI systems reach decisions. From the EU AI Act to US ECOA guidance, the regulatory pressure for explainable AI in finance is accelerating. Understanding these requirements is essential for compliance and competitive positioning.

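The EU AI Act is the world's first comprehensive AI regulation. It takes a risk-based approach, classifying AI systems into four risk tiers (unacceptable, high, limited, and minimal risk). AI systems used to evaluate the creditworthiness or establish the credit score of natural persons, and those used for risk assessment and pricing in life and health insurance, are classified as high-risk, requiring transparency, human oversight, and technical documentation.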

Academic Research Library

A curated collection of peer-reviewed studies, regulatory reports, and industry analyses on XAI in financial services.

Comprehensive systematic review of XAI applications across financial services, covering credit scoring, fraud detection, trading, and risk management. Identifies dominant technique...

CFA Institute report examining how XAI addresses the needs of different stakeholders in finance - regulators, risk managers, clients, and model validators. Provides practical imple...

BIS Financial Stability Institute analysis of global regulatory approaches to AI explainability in financial services. Provides comparative framework and practical guidance for fin...

Pioneering application of mechanistic interpretability to LLMs in financial services. Analyzes GPT-2 attention patterns for Fair Lending law compliance, identifying circuits respon...

Comprehensive survey of XAI approaches for financial time series prediction (2018-2024). Categorizes attention mechanisms, SHAP variants, and counterfactual methods for trading and...

First study using interpretable ML to reveal HFT trading dynamics. Designs AI trading algorithms by reusing trading markers identified during explainable trading dynamics discovery...

Comparative study of SHAP and LIME for credit scoring interpretability. Evaluates discriminative power and regulatory compliance of post-hoc explanation methods on real lending dat...

Multi-market evaluation of SHAP-calibrated gradient boosting ensembles (XGBoost, LightGBM) for credit scoring. Demonstrates how SHAP calibration improves both accuracy and explaina...

Seminal paper proposing an XAI system for automated loan underwriting. Combines gradient boosting with SHAP explanations to provide loan officers with interpretable decision suppor...

Novel GNN-XAI framework for detecting complex multi-layered money laundering networks. Integrates GNNExplainer to provide subgraph-level explanations for AML alerts.

Combines SHAP with deep learning for AML, addressing both explainability and fairness. Demonstrates how SHAP explanations reduce false positive rates by enabling investigators to v...

Novel ensemble architecture combining CNN, RNN, and GNN with attention-based gating for fraud detection. Achieves state-of-the-art performance with built-in explainability through ...

Comprehensive survey of XAI methods in insurance. Finds SHAP and LIME most prevalent in claims management, underwriting, and actuarial pricing. Identifies simplification methods as...

Introduces AI-enhanced ESG framework integrating XAI and multi-criteria analysis. Demonstrates how SHAP explanations improve ESG score transparency and reduce greenwashing risk.

Comprehensive analysis of mechanistic interpretability applied to LLMs in financial services. Covers circuit discovery, attention head analysis, and automated interpretability for ...

Policy analysis examining the intersection of explainability and fairness in ML-based credit underwriting. Provides regulatory guidance on implementing XAI for ECOA compliance.